Carrots and sticks: How new challenges are bringing fresh opportunities for HPC, data analytics, and AI

This Cabot Partners article sponsored by IBM was published at HPCwire at this URL. This article is identical, but graphics are presented in higher resolution.

---

For decades, banks have relied on high-performance computing (HPC). When it comes to problems too hard to solve deterministically (like predicting market movements), Monte Carlo simulation is the only game in town.

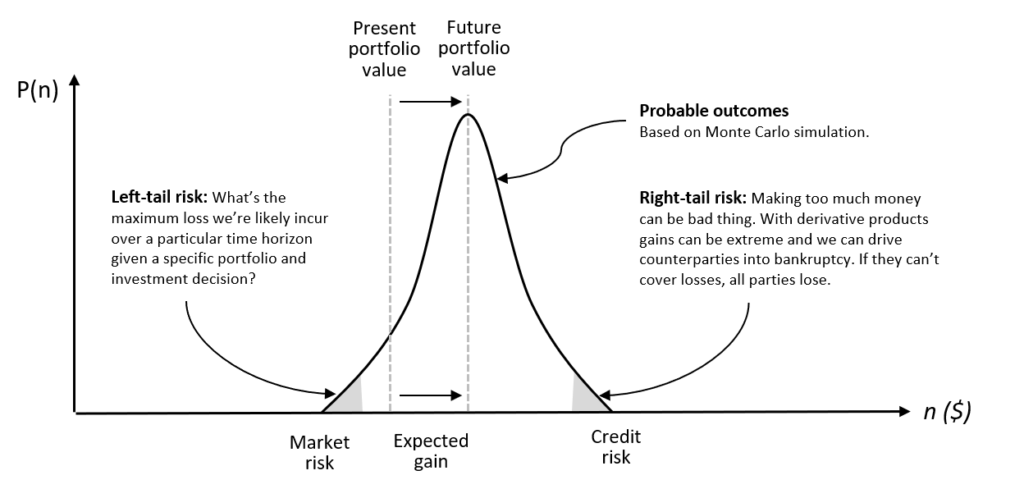

Banks use proprietary pricing models to calculate the future value of various financial instruments. They generate thousands of scenarios (essentially randomized vectors of self-consistent risk factors), compute the value of each instrument across every scenario (often over multiple time steps), and roll up the portfolio value for each scenario. The result is a probability distribution showing the range of probable outcomes. The more sophisticated the models, and the more scenarios considered, the higher the confidence in the predicted result.
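
The scenario-and-roll-up loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `simulate_portfolio_values`, the sample positions, and the choice of independent geometric Brownian motion for each instrument are hypothetical simplifications, not any bank's pricing model. Production systems use correlated risk factors and far richer instrument models.

```python
import math
import random

def simulate_portfolio_values(positions, n_scenarios=10_000, horizon_years=1.0, seed=42):
    """Return one simulated portfolio value per scenario.

    positions: list of (quantity, spot_price, annual_drift, annual_volatility).
    Assumption: each instrument follows an independent geometric Brownian
    motion over a single time step.
    """
    rng = random.Random(seed)
    values = []
    for _ in range(n_scenarios):
        total = 0.0
        for quantity, spot, drift, vol in positions:
            z = rng.gauss(0.0, 1.0)  # one random shock per instrument
            growth = math.exp((drift - 0.5 * vol ** 2) * horizon_years
                              + vol * math.sqrt(horizon_years) * z)
            total += quantity * spot * growth  # value the instrument in this scenario
        values.append(total)  # roll up to a portfolio value for this scenario
    return values

# Hypothetical two-instrument portfolio.
positions = [(100, 50.0, 0.05, 0.20), (200, 20.0, 0.03, 0.35)]
values = simulate_portfolio_values(positions)
mean_value = sum(values) / len(values)
print(f"scenarios: {len(values)}, mean portfolio value: {mean_value:.2f}")
```

The list `values` is the empirical distribution of outcomes. Increasing `n_scenarios` tightens the estimate at the cost of more compute, which is why these workloads scale so naturally across large clusters.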

Analysts often focus on value-at-risk (VaR), or left-tail risk, which refers to the left side of the curve representing worst-case market scenarios. Risk managers literally take these results to the bank, relying on computer simulation to help grow portfolio values and manage risk.
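
As a rough illustration of how VaR is read off the left tail, the sketch below takes a list of simulated profit-and-loss outcomes and returns the loss that is exceeded in only a small fraction of scenarios. The function name `value_at_risk`, the simple empirical-quantile rule, and the normally distributed sample data are assumptions made for this example. Real risk engines use their own quantile conventions and scenario sets.

```python
import random

def value_at_risk(pnl, confidence=0.99):
    """Empirical VaR: the loss exceeded in roughly (1 - confidence) of scenarios."""
    if not pnl:
        raise ValueError("pnl must not be empty")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    ordered = sorted(pnl)  # worst outcomes (largest losses) come first
    # Rounding guards against floating-point noise such as 0.9999999999999998.
    index = int(round((1.0 - confidence) * len(ordered), 9))
    index = min(index, len(ordered) - 1)
    return -ordered[index]  # report the loss as a positive number

# Hypothetical P&L distribution: 100,000 scenarios, standard deviation of 1 million.
rng = random.Random(7)
pnl = [rng.gauss(0.0, 1_000_000.0) for _ in range(100_000)]
var_99 = value_at_risk(pnl, 0.99)
print(f"99% VaR: {var_99:,.0f}")
```

A higher confidence level moves the cut-off further into the tail, where fewer scenarios land, so estimating it reliably requires more scenarios.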

Accuracy and speed provide a competitive edge

In the game of financial risk, both speed and accuracy matter. Banks need to maximize gains while maintaining capital reserves adequate to cover losses in worst-case scenarios. Nobody wants cash sitting idle, so accurately assessing VaR helps banks quantify needed reserves, maximize capital deployed, and boost profits.

Simulation is used for dozens of financial applications including model back-testing, stress-testing, new product development, and developing algorithms for high-frequency trading (HFT). Firms with more capable HPC can run deeper analysis faster, bid more aggressively, and model more scenarios pre-trade to make faster, better-informed decisions.

While traditional HPC is often about raw compute capacity (modeling a vehicle collision in software, for example), financial simulation demands both capacity and timeliness. Reflecting this urgency, vendors have responded with specialized, service-oriented grid software purpose-built for low-latency pricing calculations. State-of-the-art middleware can bring thousands of computing cores to bear on a parallel problem almost instantly, with sub-millisecond overhead. As financial products grow in complexity, banks increasingly compete on the agility, capacity, and efficiency of their HPC infrastructure.

Post-2008, the plot thickens

Much has been written about the financial crisis of 2008, but an obvious consequence for banks has been an increase in regulation. The crisis highlighted systemic vulnerabilities related to credit risk, liquidity risk, and swap markets trading derivative products.

While these risks were already known, the crisis reinforced that in addition to left-tail risk (the probability of market losses), firms also needed to emphasize right-tail risk (or credit risk), when paper gains become so large that counterparties are forced into insolvency (potentially leading to cascading bankruptcies). Faced with unpopular government bailouts, politicians and regulatory bodies unleashed a torrent of regulation, including Basel III, Dodd-Frank, CRD IV and CRR, and Solvency II, all aimed at avoiding a repeat of the crisis.

Modeling Counterparty Credit Risk (CCR) is much harder than modeling market risk, so banks were again forced to re-tool, investing in systems to calculate new metrics like Credit Value Adjustment (CVA), a fair-value adjustment to the price of derivatives that takes CCR into account.
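
To make the CVA idea concrete, here is a minimal sketch of the widely used discretized, unilateral approximation: CVA is roughly (1 - recovery rate) times the sum, over time buckets, of discounted expected exposure multiplied by the probability that the counterparty defaults in that bucket. The function name `credit_value_adjustment`, the flat hazard rate, the flat risk-free rate, and the exposure profile are all assumptions chosen for illustration. In practice the expected exposure profile itself comes from a large Monte Carlo simulation, which is where most of the compute cost lies.

```python
import math

def credit_value_adjustment(expected_exposure, times, hazard_rate,
                            recovery_rate, risk_free_rate):
    """Discretized unilateral CVA with flat hazard and discount rates.

    expected_exposure[i] is the expected positive exposure at times[i] (in years).
    """
    if len(expected_exposure) != len(times):
        raise ValueError("expected_exposure and times must have the same length")
    total = 0.0
    previous_t = 0.0
    for t, exposure in zip(times, expected_exposure):
        # Probability that the counterparty defaults in (previous_t, t].
        default_prob = math.exp(-hazard_rate * previous_t) - math.exp(-hazard_rate * t)
        discount = math.exp(-risk_free_rate * t)
        total += discount * exposure * default_prob
        previous_t = t
    return (1.0 - recovery_rate) * total

# Hypothetical exposure profile for a five-year swap, sampled once a year.
times = [1.0, 2.0, 3.0, 4.0, 5.0]
exposure_profile = [1.2e6, 1.8e6, 1.9e6, 1.4e6, 0.6e6]
cva = credit_value_adjustment(exposure_profile, times, hazard_rate=0.02,
                              recovery_rate=0.4, risk_free_rate=0.03)
print(f"CVA estimate: {cva:,.0f}")
```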

The latest round of regulation affecting banks is the Fundamental Review of the Trading Book (FRTB), published by the Basel Committee on Banking Supervision and expected to go fully into effect by 2022. FRTB will require banks to adopt the Standardised Approach (SA) and to compute and report on a variety of new metrics, further increasing infrastructure requirements and compliance costs.

As new workloads emerge, banks become software shops

As if banks didn’t have enough on their plate already, competitive pressures are forcing them to invest in new capabilities for reasons unrelated to regulation. Big data environments are used for a variety of applications in investment and retail banking including cultivating loyalty, reducing churn, boosting cyber-security, and making better decisions when extending credit. Banks increasingly resemble software companies, employing hundreds of developers as software becomes ever more critical to competitiveness.

Faced with competition from alt-lenders and online start-ups, financial firms are racing to leverage Artificial Intelligence (AI) to improve service delivery and reduce costs. Today AI is being used in robo-advisors, improved fraud detection, and applications like loan and insurance underwriting. New predictive models rely on machine learning to supplement traditional predictive methods and enable better quality decisions. Applications on the horizon include automating customer service with chatbots, automating sales recommendations, and leveraging AI for deeper analysis of big data sources like news feeds to improve decision quality further.

An abundance of frameworks compounds infrastructure challenges

Banks face both challenges and opportunities. On the one hand, competitive pressures and new regulations are forcing banks to make new investments in systems and software; on the other hand, advances in technologies like AI, cloud computing, and container technology promise to reduce cost, improve agility, and boost competitiveness.

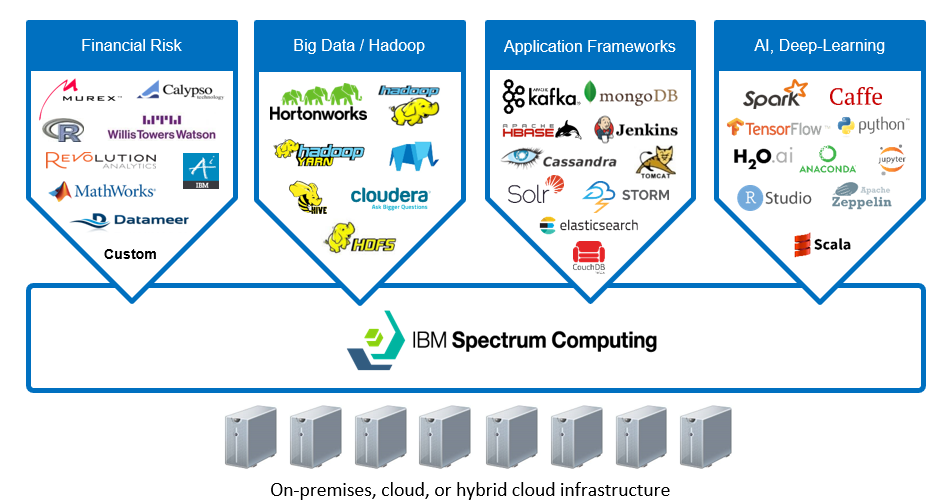

As new capabilities are added, legacy systems don’t go away, so it’s imperative that the high-performance infrastructure support multiple software frameworks. Banks need to run not only core risk analytics, but also big data (Hadoop and Spark), streaming analytics, and scalable software environments for training and deploying deep learning models. In the age of big data, information increasingly resides in distributed, scaled-out systems including not only HDFS and HBase but also distributed caches, object stores, and NoSQL stores like Cassandra and MongoDB.

Beyond simply considering where to run these applications (on-premises, in public clouds, or both), the real challenge is the diversity of frameworks. Adding more compute capacity or additional siloed systems is not the answer. Banks need solutions that will provide flexibility and help them operate scaled-out high-performance environments more efficiently.

A shared infrastructure for high-performance financial workloads

As banks grapple with new regulation and embrace new technologies to deliver services more efficiently, many see an opportunity to re-think their infrastructure. Production-proven in the world’s leading investment banks, IBM Spectrum Computing is a solution for accelerating and simplifying the full range of high-performance financial applications including AI, big data, and risk analytics. Spectrum Computing can help banks seamlessly and efficiently share infrastructure resources on-premises or on their preferred cloud platform with minimal disruption to existing systems.

To learn how you can simplify and consolidate application environments, and build a future-proof, cloud-agnostic IT infrastructure, download IBM’s whitepaper Modernizing your Financial Risk Infrastructure with IBM Spectrum Computing.